I get asked quite often about our product development process and how we decide which features to build at Akiflow.

It’s a complex process that we have kept as lean as possible. It involves:

- Talking to customers

- Looking at the market and competitors

- Tracking feature requests

- Coming up with new ideas

- Voting

It involves everyone in the company, from developers to customer care executives. Before we dive into the process we have at Akiflow, here’s a quick intro to the team and the product.

Team

We are a fully remote team of 6, building Akiflow.

What’s Akiflow

Akiflow is a productivity tool that collects all your tasks and calendars from different tools (Google Calendar, email, Slack, Asana, etc.) in a single view. It has a clean and familiar interface and Superhuman-like shortcuts.

Tools that we use for Product Development

- Notion, for product planning

- Canny, to track feature requests

- Intercom, for customer care

- Slack, both internally and for our community

The Process

Background

Making sure that everyone is involved.

As mentioned before, everyone in the team is involved in our product development process. We use Slack to ensure everyone has complete transparency on what is happening.

Canny pushes new feature requests to Slack so everyone can see what users are asking for. All our Slack channels are open, so everyone in the company can see what is being discussed. This empowers every team member to come up with good ideas for improving the product or fixing a problem.

Providing a solution for the pain

Before making any decision regarding product development, we make sure we are not moving away from the fundamental pillars that are the backbone of Akiflow.

Our focus is helping people keep themselves organized faster by speeding up:

- Capturing and consolidating tasks

- Processing tasks (snooze, plan, label, etc.)

- Execution

Gathering the data

Talking to customers

We have innovative feature ideas, and we have our long-term vision of what Akiflow will be, but most of the features come straight from our customers’ feature requests.

We are not just customer-centric; we are obsessed with talking to our users.

We engage with them in many different ways:

- We have a chat inside our app.

- Welcome messages and email series.

- We run the product-market fit survey inside the app.

- We manually ask users about their experience.

- We do onboarding calls for free.

- We schedule calls with paying users.

- We have a Slack community.

- We engage in conversations on our feedback platform.

This is how we collect feature requests and, most importantly, identify pains.

Tracking feature requests

We use Canny, so our users can add new feature requests or bug reports and upvote or comment on what’s already there.

When a request comes up in a conversation (e.g. Intercom, onboarding/feedback call, Slack), we always track it on Canny. It’s also a great platform to start conversations, keep customers engaged, and reactivate churned ones since they get notified when we develop something they upvoted.

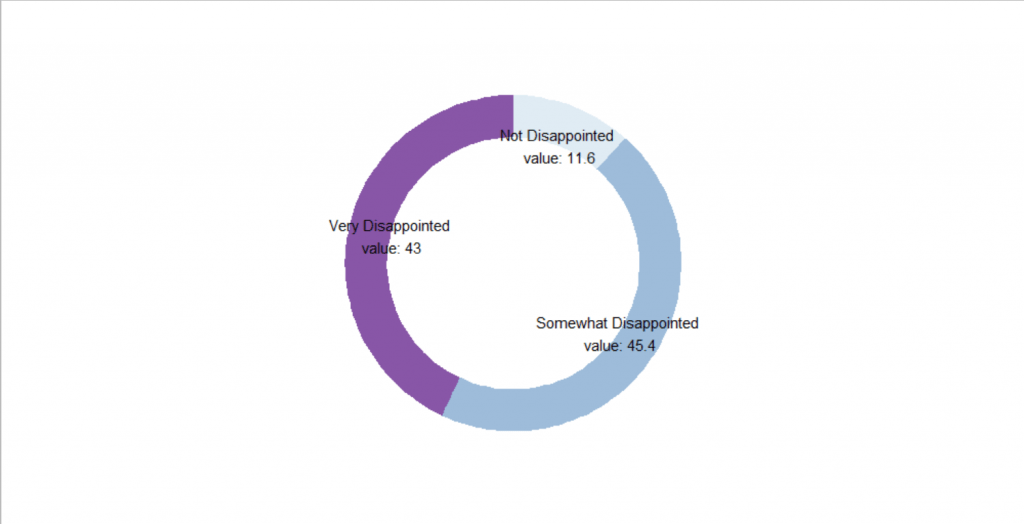

Product Market Fit Survey

Inspired by the work at Superhuman, we recently started running a Product/Market Fit survey (by Sean Ellis) inside Akiflow for all our users. We ask them, “How disappointed would you be if you couldn’t use Akiflow anymore?”

- PMF Survey

- Our Results

Sean Ellis suggests that having more than 40% of customers answer “very disappointed” is an excellent sign of PMF. (We scored 43% 😉.)
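To make the arithmetic concrete, here is a minimal sketch of how such a score could be computed from raw answers. The function name, the “Not disappointed” option label, and the sample data are assumptions made for illustration; they are not our actual survey export or response breakdown.

```python
from collections import Counter

# Answer options for the PMF question. The first two appear in our survey;
# the third label is an assumption for this sketch.
VERY = "Very disappointed"
SOMEWHAT = "Somewhat disappointed"
NOT = "Not disappointed"

PMF_THRESHOLD = 0.40  # the benchmark suggested by Sean Ellis


def pmf_score(answers):
    """Return the share (0..1) of respondents who answered 'Very disappointed'."""
    if not answers:
        raise ValueError("no survey answers to score")
    counts = Counter(answers)
    return counts[VERY] / len(answers)


# Hypothetical sample, NOT real data: 100 answers, 43 of them 'very disappointed'.
sample = [VERY] * 43 + [SOMEWHAT] * 37 + [NOT] * 20
score = pmf_score(sample)
verdict = "above" if score > PMF_THRESHOLD else "not above"
print(f"PMF score: {score:.0%} ({verdict} the 40% benchmark)")
```

Only the “very disappointed” share counts toward the score; the other answers matter later, when requests are prioritized.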

Another question we ask in the survey is, “How can we improve Akiflow for you?”

We track the requests that come out of the answers to this question and follow up with the users via chat to expand on them and get more context.

Prioritize (art and science)

Now that we have gathered the data, we start the prioritization process. For every idea, we compute an internal feature prioritization score based on both quantitative and qualitative data.

MRR + PMF Score

We integrated with Canny via its API to push each user’s value (MRR), so we know which features are requested by paying customers.

We also track the product-market fit survey result, and we associate it with feature requests, segmenting it by subscription type (if the user is paying or not).

This makes it very easy to prioritize all the requests.

- PMF = “Very disappointed” → Very High Priority

- PMF = “Somewhat disappointed” → High Priority, this is where we have a significant opportunity to improve
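As a rough illustration of the segmentation described above, the sketch below groups votes by feature, sums the MRR behind each one, and counts voters by priority level. The `Vote` record, its field names, and the sample votes are hypothetical; this does not use or reproduce Canny’s API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Vote:
    feature: str     # name of the requested feature
    mrr: float       # voter's monthly recurring revenue; 0 for free users
    pmf_answer: str  # voter's answer to the PMF question, "" if unknown


# Mapping from PMF answer to priority level, as listed above.
PRIORITY = {
    "Very disappointed": "Very High",
    "Somewhat disappointed": "High",
}


def summarize(votes):
    """Aggregate votes per feature: total MRR, paying/free voters, priority counts."""
    summary = defaultdict(
        lambda: {"mrr": 0.0, "paying": 0, "free": 0, "Very High": 0, "High": 0}
    )
    for vote in votes:
        row = summary[vote.feature]
        row["mrr"] += vote.mrr
        row["paying" if vote.mrr > 0 else "free"] += 1
        level = PRIORITY.get(vote.pmf_answer)
        if level is not None:
            row[level] += 1
    return dict(summary)


# Hypothetical votes, for illustration only.
votes = [
    Vote("Recurring tasks", 15.0, "Very disappointed"),
    Vote("Recurring tasks", 0.0, "Somewhat disappointed"),
    Vote("Dark mode", 15.0, ""),
]
for feature, row in summarize(votes).items():
    print(feature, row)
```

Sorting the resulting rows by total MRR or by the number of “Very High” voters gives a first ordering before the other inputs are applied.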

Other inputs

Besides the PMF score and the $ value of a request, we consider the following data points when computing the feature prioritization score:

- Increase the score:

  - It perfectly matches our job-to-be-done

  - Increases virality

  - Users expect the app to work this way

  - It has a WOW effect

  - We (the team) really like the idea

  - Requires less than 8 hours

- Decrease the score:

  - Requires extra learning from the user

  - Requires an additional UI component that is always visible

  - Requires more than 32 hours

  - Impacts the speed of the app

  - Can create pain for the user

  - Can be used in the wrong way
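The inputs above could be combined along these lines. This is only a sketch: the equal weight of one point per factor is an assumption, as are all the names. The list above tells us which direction each factor moves the score, not by how much; only the 8-hour and 32-hour effort thresholds come from it.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    estimated_hours: float
    # Factors that increase the score
    matches_job_to_be_done: bool = False
    increases_virality: bool = False
    expected_by_users: bool = False
    wow_effect: bool = False
    team_likes_it: bool = False
    # Factors that decrease the score
    extra_learning: bool = False
    always_visible_ui: bool = False
    slows_app: bool = False
    can_cause_pain: bool = False
    can_be_misused: bool = False


BOOSTS = ("matches_job_to_be_done", "increases_virality", "expected_by_users",
          "wow_effect", "team_likes_it")
PENALTIES = ("extra_learning", "always_visible_ui", "slows_app",
             "can_cause_pain", "can_be_misused")


def score_adjustment(feature, weight=1):
    """Sum the boosts, subtract the penalties (equal weights are an assumption)."""
    score = sum(weight for name in BOOSTS if getattr(feature, name))
    score -= sum(weight for name in PENALTIES if getattr(feature, name))
    if feature.estimated_hours < 8:       # small effort increases the score
        score += weight
    elif feature.estimated_hours > 32:    # large effort decreases the score
        score -= weight
    return score


# Hypothetical backlog items, for illustration only.
quick_win = Feature("Quick capture shortcut", estimated_hours=6,
                    matches_job_to_be_done=True, wow_effect=True)
big_bet = Feature("Persistent sidebar", estimated_hours=40,
                  expected_by_users=True, always_visible_ui=True)
print(quick_win.name, score_adjustment(quick_win))
print(big_bet.name, score_adjustment(big_bet))
```

In practice this adjustment would be added to whatever base score the MRR and PMF data produce, and the weights would be tuned by the team.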

Taking Action → The product meeting

Three days before the meeting, we share newly discovered pains and open issues internally so people can come up with new feature ideas to address them. Once everything is in place, we can have our product meeting.

Duration: 1h.

Participants: Marketing, Product, Customer Experience, Development, Design

The head of product comes with a list of all the features in our backlog, ordered by score.

Everyone can upvote two features. We review all the features to ensure no one sees roadblocks or issues.

Beta release

We are lucky enough to have an incredible community of Akiflow users who signed up for our beta program. We have a dedicated Slack channel to make communication smoother.

We release our initial version to the beta users, and we usually get some feedback on bugs and issues that we didn’t spot while developing.

Measuring Impact

The impact of a change can often be measured by looking at significant changes in behavior from our users and by talking with them regarding the new feature. The most important aspect of the process is identifying key metrics and tracking them over time to measure the impact.

For example, for a few months we have had an active referral program that rewards our users for bringing in their friends. It has been one of the key drivers of user acquisition and growth. The new feature we launched is a message that reminds people of the rules of the referral program. From this update, we would expect a change in the number of people who sign up through a referral.

Another example is a change in the onboarding experience that we shipped three months ago. In this case, we measured (among other things) early users’ retention and overall feature adoption.

We use different methods and tools to evaluate the change in behavior. For example, Mixpanel has a handy impact report feature that includes a confidence measure indicating the statistical significance of the reported results.
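To give an idea of what “statistical significance” means for the referral example, here is a minimal sketch of a two-proportion z-test comparing the share of referral sign-ups before and after a change. It is a generic textbook test, not a description of how Mixpanel computes its report, and the numbers are made up for illustration.

```python
import math


def two_proportion_z_test(hits_before, n_before, hits_after, n_after):
    """Return (z, two-sided p-value) for the difference between two proportions."""
    p_before = hits_before / n_before
    p_after = hits_after / n_after
    # Pooled proportion under the null hypothesis of "no change".
    pooled = (hits_before + hits_after) / (n_before + n_after)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_before + 1 / n_after))
    if std_err == 0:
        raise ValueError("cannot test: pooled proportion is exactly 0 or 1")
    z = (p_after - p_before) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: referral sign-ups out of all sign-ups in each period.
z, p_value = two_proportion_z_test(hits_before=40, n_before=1000,
                                   hits_after=70, n_after=1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
if p_value < 0.05:
    print("The change in the referral sign-up rate is unlikely to be noise.")
```

A test like this only says whether the rate changed, not why; other launches or seasonality in the same period can produce the same signal.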

Another way we measure impact is more qualitative, and it involves talking with our users to discuss how they are using our features and if we can improve them even more.

